Insights on CAD/CAM, CNC Machines, and Industrial Robots

The Evolution of CAM Systems in Machining: A Global Historical Perspective

- Andrew Lovygin

Introduction

Computer-Aided Manufacturing (CAM) for machining has transformed how we make everything from aircraft components to consumer products. CAM refers to using computers to plan, simulate, and control manufacturing processes - especially the toolpaths that guide machine tools. Its development spans over seven decades and reflects a rich interplay of technological innovation, industrial needs, and geopolitical forces. From its roots in Numerical Control (NC) in the 1950s to today’s cloud-powered platforms, CAM’s story involves pioneering countries (from the USA and USSR to Germany, Japan, the UK, and beyond), key national programs and university labs, iconic companies and software, and dramatic shifts in computing and manufacturing technology. This article traces that history in detail – highlighting early milestones, major corporate players of the 1960s–1990s, the fate of Cold War-era systems, advances like 3D and multi-axis machining, and the influence of global events (such as the Space Race, the rise of Japan’s industry, and the end of the Cold War).

We will see how CAM evolved from punch cards and mainframes to PC-based software and now cloud solutions, and how its role expanded across industries – from milling bomber parts in the 1950s to streamlining automotive production in the 1980s and beyond. As one expert noted, “Numerical control marked the beginning of the second industrial revolution and the advent of an age in which the control of machines and industrial processes would pass from imprecise craft to exact science.” Today’s CAM systems are the heirs of that revolution, with much of their lineage tracing back to a few visionary projects and individuals. In fact, it’s estimated that “70% of all 3D mechanical CAD/CAM systems can trace their roots” to one early pioneer’s original code - a testament to the lasting impact of the field’s founders.

This extensive historical review is organized by era and theme. We’ll begin with the origins of NC and CAM in the 1950s, then explore the expansion during the 1960s–70s (driven by Cold War competition and industrial demand), the rise of commercial CAM software in the 1980s, and the wave of consolidation and technological leaps in the 1990s. Along the way, we will spotlight the contributions and interactions of different countries, detail the stories of notable companies (from early names like Numerical Control Inc. and IBM to stalwarts like FANUC and Unigraphics/NX), and examine how technologies (e.g. 2D to 3D, 5-axis machining, Windows OS, parametric modeling) and global economics shaped the CAM landscape.

Let’s dive into the journey of CAM in machining - a journey of innovation, competition, and transformation.

Early Pioneers: NC and the Birth of CAM (1940s–1950s)

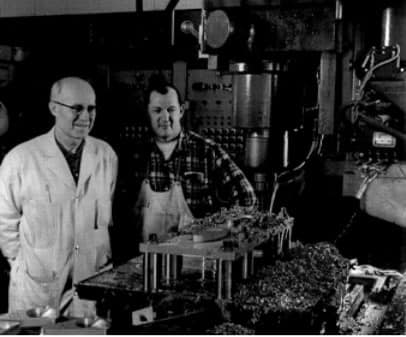

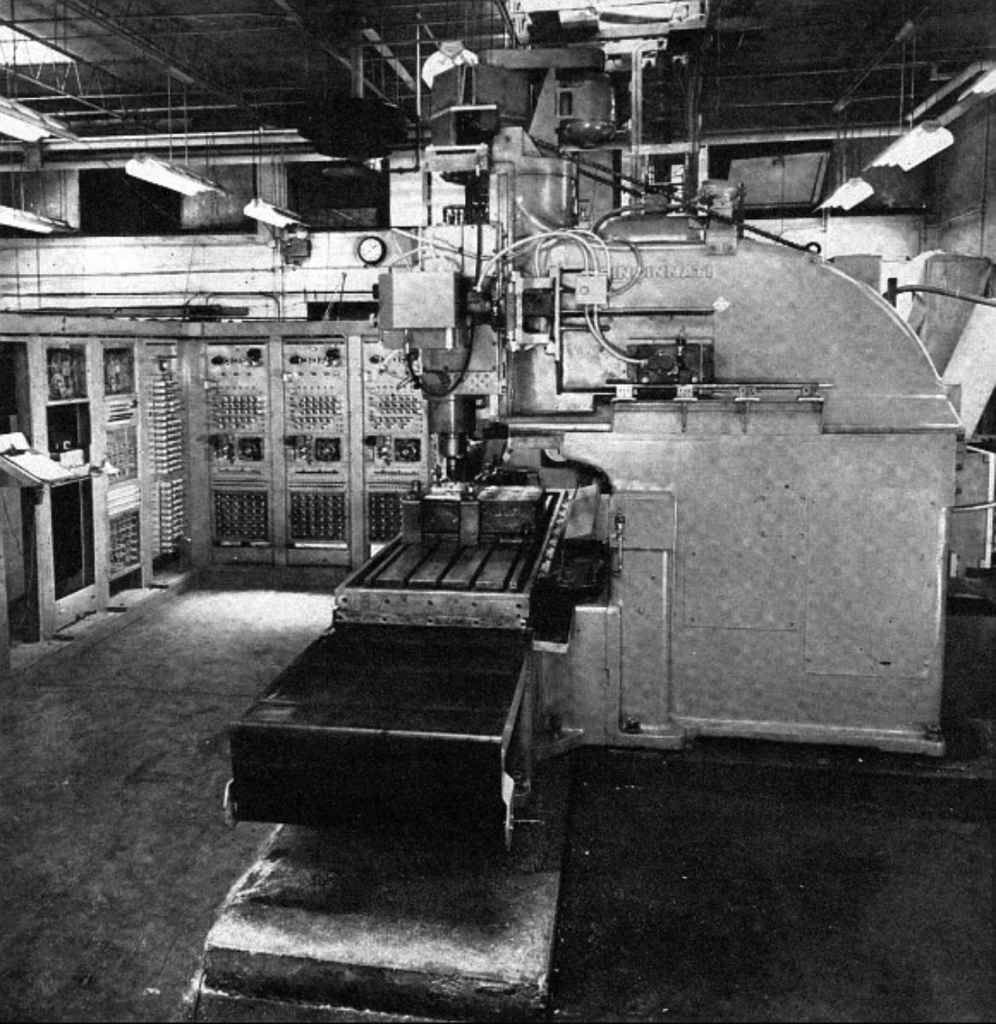

The concept that would become CAM began with numerical control (NC) - using encoded numeric instructions to control machine tools - in the late 1940s and 1950s. In the United States, the birth of NC is often credited to inventor John T. Parsons, who in 1949 proposed automating a milling machine using punched cards to guide the cutter. Working with engineer Frank L. Stulen, Parsons developed a method to calculate coordinates for complex aircraft parts (like curved wing ribs) and feed them to machine tools. The U.S. Air Force, seeking to improve manufacturing of jet aircraft components during the early Cold War, funded this visionary idea. The project was contracted to the MIT Servomechanisms Laboratory - a leading research group in control systems. By 1952, MIT demonstrated the world’s first numerically controlled machine tool: a retrofitted 3-axis Cincinnati Hydro-Tel milling machine that followed instructions from a perforated paper tape. This landmark prototype, shown below, moved a cutting tool in three dimensions based on coded input, achieving precision unattainable by manual machining at the time.

John T. Parsons (The person on the left in the photo).

Although rudimentary by modern standards, MIT’s 1952 NC milling machine was an “outstanding success by any technical measure,” quickly and accurately making complex cuts that were previously impractical. It used 7-track punched tape as input and hundreds of vacuum tubes in the controller - essentially creating a programmable automation system before general-purpose digital computers were readily available. The term “numerical control” (NC) was coined for this approach. Notably, Parsons filed a patent in 1952 for this NC method (granted in 1958), and a commercial venture called Numerical Control Inc. was founded in 1952 by engineer John Runyon to start building NC equipment. This was one of the first private companies in the NC/CAM space. IBM also entered the scene in the 1950s, leveraging its computing expertise to build early NC controllers. In 1955, members of the MIT team left to form Concord Controls, which (with backing from machine tool builder Giddings & Lewis) produced a magnetic-tape NC controller called “Numericord” - an indication of how rapidly NC moved from lab to industry.

Global Pioneers: While the NC revolution was born in the USA, other countries soon followed. In the Soviet Union, research groups were keenly aware of U.S. advancements and began their own NC experiments in the 1950s. By the mid-1950s, the Soviets had reportedly developed a prototype NC milling machine using an open-loop positioning system (i.e. without feedback servos). This suggests that parallel efforts to automate machine tools were underway in the USSR, albeit with more primitive technology initially. Throughout the 1950s and 60s, Soviet engineers worked on NC controls and machine tools (often via state institutes known as “NII”s), seeing automation as crucial for military and industrial strength. In Britain, the 1950s likewise saw interest in NC - for example, researchers at Cambridge University and industry partners were exploring computerized machine control by the end of the decade. One British project developed an NC lathe control as early as 1959, and by 1962 the UK’s Royal Aircraft Establishment was experimenting with NC for manufacturing jet engine blades. In Japan, the seminal influence of NC is traced to 1955–56, when Fujitsu’s telecommunications lab (led by Dr. Seiuemon Inaba) began developing numerical control devices - work that produced Fujitsu’s first NC in 1956 and gave rise to FANUC (Fuji Automatic Numerical Control). FANUC, initially a unit of Fujitsu, would become a world leader in CNC machine controls. By 1958, Japan had domestically produced its first NC milling machine, and Japanese firms were avidly importing American NC technology to learn from it. Germany also entered the fray: companies like Siemens began R&D on NC controllers in the 1950s, culminating in the Siemens Sinumerik controller introduced in 1960 - one of the first European NC control systems.

The early NC systems had significant limitations: they were extremely expensive and technically complex, relied on fragile electronics (vacuum tubes, relays) and primitive memory, and required highly skilled operators/programmers. A single NC machine in the 1950s filled a room with cabinets of circuitry, and programming was done in low-level codes specific to each machine. Yet, the promise was clear: NC could produce parts with accuracy and repeatability far beyond human operators, and could cut shapes such as smooth curves that had previously been a matter of “imprecise craft.” It’s no surprise the U.S. Air Force continued to sponsor NC development – not only for technical gains but also, as one historian noted, to shift manufacturing “off the highly unionized factory floor and into the white-collar design office,” aligning with Cold War era pressures for efficiency and control.

The first NC milling machine, demonstrated at MIT in 1952. The large cabinets (left) contain vacuum-tube logic controlling the Cincinnati Hydro-Tel 3-axis mill (right). This prototype, funded by the U.S. Air Force, could mill complex contours by following instructions on punched tape – a milestone in computer-aided manufacturing.

From NC to CAM: Early Software and Language Advances

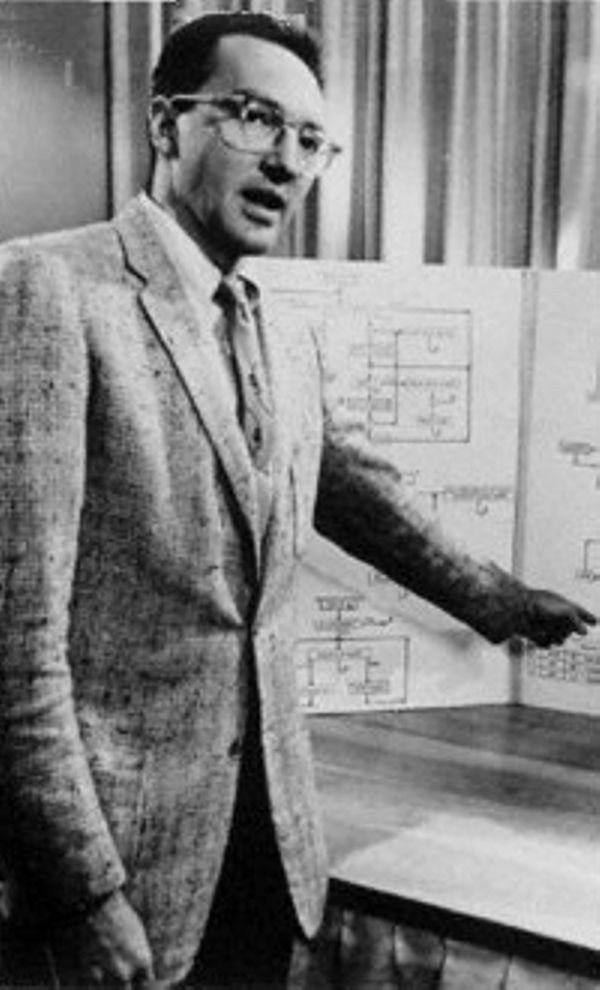

While the first NC machines were hardwired to follow coordinate inputs, the next breakthrough was creating software to generate those inputs - essentially the first CAM software. In 1956, MIT (with Air Force funding) launched the Automatically Programmed Tools (APT) project. Douglas T. Ross, head of MIT’s Computer Applications Group, led the development of APT: a high-level programming language that allowed operators to write machining instructions in a more human-readable form (using geometry and motion commands), which the computer would compile into low-level machine tool moves. APT greatly simplified programming of NC machines and became a de facto standard. By 1960, APT was successfully used to program complex parts; the language was released into the public domain and widely adopted across industry (including by companies like Boeing and General Electric). This marks one of the earliest true “CAM” software achievements – using a computer to aid manufacturing by automatically generating toolpath code.
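The core idea behind APT, human-readable motion statements compiled into low-level machine moves, can be illustrated with a minimal Python sketch. This is not real APT and not MIT’s implementation: it accepts only three simplified, APT-flavored statements (`FEDRAT/`, `RAPID`, `GOTO/`), and the function name `compile_apt_like` and the G-code-style output format are assumptions chosen purely for illustration.

```python
def compile_apt_like(source):
    """Translate a tiny APT-flavored program into G-code-style blocks.

    Supported statements (a simplified, hypothetical subset):
      FEDRAT/f      set the feed rate for later cutting moves
      RAPID         make the next GOTO a rapid (non-cutting) move
      GOTO/x,y,z    move the tool to an absolute position
    """
    feed = None          # current feed rate, unset until FEDRAT is seen
    rapid_next = False   # True if the next GOTO should be a rapid move
    blocks = []

    for raw in source.strip().splitlines():
        line = raw.strip().upper()
        if not line:
            continue
        word, _, args = line.partition("/")
        if word == "FEDRAT":
            feed = float(args)
        elif word == "RAPID":
            rapid_next = True
        elif word == "GOTO":
            x, y, z = (float(v) for v in args.split(","))
            if rapid_next:
                blocks.append(f"G00 X{x:.3f} Y{y:.3f} Z{z:.3f}")
                rapid_next = False
            else:
                if feed is None:
                    raise ValueError("cutting move requested before FEDRAT")
                blocks.append(f"G01 X{x:.3f} Y{y:.3f} Z{z:.3f} F{feed:.0f}")
        else:
            raise ValueError(f"unsupported statement: {raw!r}")
    return blocks


program = """
RAPID
GOTO/0,0,5
FEDRAT/200
GOTO/10,0,-1
"""
for block in compile_apt_like(program):
    print(block)
```

A real APT processor went much further, resolving symbolic geometry (points, lines, circles, surfaces) into cutter locations before a post-processor adapted them to a particular machine; the sketch only shows the last, simplest step of turning statements into moves.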

Douglas T. Ross.

Another early software milestone came from industry: Dr. Patrick J. Hanratty, often called the “Father of CAD/CAM,” developed PRONTO (Program for Numerical Tooling Operations) at General Electric in 1957. PRONTO was an NC programming system, developed at about the same time as APT, that ran on a mainframe and helped to create punched tape instructions. Hanratty went on to found Manufacturing Consulting Services (MCS) in 1971 and created a system called ADAM (Automated Drafting and Machining) – an interactive CAD/CAM software package. ADAM was innovative in that it combined drafting (CAD) with toolpath generation (CAM), and Hanratty’s business model was to license this core software to other vendors who would build their own applications on it. This approach was hugely influential – “70 percent of all 3-D mechanical CAD/CAM systems available today trace their roots back to Hanratty’s original code,” according to industry studies. In fact, ADAM’s code formed the basis for early versions of Unigraphics, a CAD/CAM system that eventually evolved (through corporate twists) into today’s Siemens NX. Thus, by the end of the 1950s, the pieces were in place: numerical control machines, programming languages like APT and proto-CAM software like PRONTO – setting the stage for CAM to blossom in the following decades.

Dr. Patrick J. Hanratty.

Institutions and Funding: It’s important to note the critical role of government and university labs in this era. In the U.S., the Air Force and later other branches (and agencies like ARPA) poured substantial investment into automation as a strategic technology. MIT’s NC and APT projects were direct outcomes of defense funding. So was the subsequent 1959–1965 MIT project on Computer-Aided Design (CAD), which sought to integrate design with manufacturing. In the same period, General Motors (with IBM) built the “DAC-1” system - in 1963 DAC-1 managed to convert a 2D car design drawing into a machined 3D model (a trunk lid prototype), effectively closing the loop from computer design to computer-controlled manufacturing. This achievement at GM was an early demonstration of CAD/CAM convergence: by generating an NC tape from a digital design and cutting a prototype, they proved the feasibility of end-to-end computer-aided design and manufacturing.

Meanwhile, in the USSR, NC research was coordinated by government ministries such as the Ministry of Machine Tool Building (Minstankoprom) and the Ministry of Instrument Making (Minpribor). By 1968, Soviet planners issued directives for nationwide development of NC machine tools. One prominent Soviet institute was NIIAS (Scientific Research Institute of Automated Systems), which, along with others, worked on indigenous CAM software and CNC controls throughout the 1960s–80s. The United Kingdom had the Cambridge University Engineering Department’s CAM group (sponsored by the Science Research Council and firms like Ford) under Professor Donald Welbourn, which by 1965 was investigating 3D computer modeling for manufacturing. This eventually led to the “Cambridge CAD/CAM” team that developed surface modeling techniques and later spun off into the company Delcam (founded formally in 1977). All these efforts show that, within a decade or so of the first NC machine, multiple countries had recognized the potential of computer-aided manufacturing and seeded programs to develop it – often linking universities, government labs, and private firms in early research consortia.

In summary, the 1950s delivered the proof of concept of CAM: automated control of machine tools was possible and beneficial. The USA pioneered the field with MIT’s NC machine and APT language, supported by Air Force vision and funding. Other nations were not far behind – the Soviets built prototype NC machines by the late ’50s, and the British, Germans, and Japanese all started cultivating NC technology heading into the 1960s. At this stage, “CAM” per se was not a common term (the acronym wouldn’t become popular until the 1970s), but the essential idea – using computers to aid manufacturing – was established in practice. The focus now would shift to refining these technologies, expanding their use, and connecting design (CAD) to manufacturing (CAM). This set the stage for an explosion of development in the 1960s and 1970s.

Expansion and Innovation in the Cold War Era (1960s–1970s)

By 1960, numerical control had moved from experimental to emerging industrial practice. The 1960s saw NC and early CAM technologies proliferate – especially in high-tech industries like aerospace – and also saw many new players (countries, companies, and research centers) contributing innovations. At the same time, this era introduced the transition from pure NC to computerized NC (CNC), as the advent of reliable computers and transistors allowed more direct computer control of machines. The Cold War competition between East and West, and the rapid growth of consumer industries, provided strong incentives for advancement. Below, we examine key developments in various regions and the milestones of the 1960s–70s.

United States – Aerospace Leads the Way: Aerospace manufacturing remained a driving force for CAM in the 1960s. Military and NASA programs demanded ever more complex machined parts (for jets, missiles, and spacecraft), which NC made possible. For example, Boeing adopted NC to produce large wing skins and structural components for bombers and airliners in this period. It’s often cited that the Boeing B-52 Stratofortress bomber, introduced in the mid-1950s, benefited from NC in manufacturing its contoured airframe parts. The cultural context of the time – a “bomber gap” scare – meant automation was viewed as a strategic advantage in building aircraft. By the early 1960s, major U.S. aerospace companies (Lockheed, Northrop, Douglas, etc.) had NC machines in use, often programming them with APT. Notably, in 1968 Lockheed used an integrated CAD/CAM system (Control Data’s Digigraphics system) to design and fabricate parts of the C-5 Galaxy transport airplane. This is considered the first end-to-end CAD/CNC production: engineers created 3D digital models of certain C-5 parts and directly generated NC tool paths to produce them. Lockheed’s success validated the concept that complex products could be digitally designed and manufactured – a significant milestone for CAM in industry.

Meanwhile, the U.S. automobile industry also began exploring CAM in the 1960s, though adoption was a bit slower than in aerospace. General Motors had its DAC-1 CAD project (with IBM) which by 1963 could feed data to NC machines. Ford and others followed with their own CAD/CAM R&D. By 1970, the U.S. had a host of CAD/CAM firms and systems either in the market or in development: a partial list includes Intergraph (founded 1969), Applicon (1969), Computervision (1969), Auto-trol (1963), and United Computing (which developed UNIAPT in 1969, an early commercial NC programming system). Many of these companies started by offering NC programming software or graphic CAD tools for big engineering customers. For instance, Computervision’s CADDS system (Computer Augmented Design and Drafting) launched in the early 1970s and offered interactive graphics terminals to create designs and output NC data – a cutting-edge capability at the time. The U.S. Department of Defense also continued investing: in the 1970s, the Air Force ran the ICAM (Integrated Computer-Aided Manufacturing) program to formalize CAD/CAM integration and funded developments like the IGES data exchange format (initiated in 1979 to allow different CAD/CAM systems to share geometry). NC and CAM were thus maturing under a combination of military support and private innovation.

Soviet Union – Centralized Efforts and Challenges: In the 1960s, the USSR massively expanded its NC machine tool programs. Under the Eighth Five-Year Plan (1966–1970), the Soviet government made series production of NC machine tools a priority. Two ministries – the Ministry of Aviation Industry and the Ministry of Machine Tool Building – were tasked with producing advanced NC machines for both military and civilian uses. A third body, Minpribor (Instrumentation and Control Systems), was responsible for developing the control equipment and electronics. This somewhat fragmented approach led to multiple parallel projects across various “NII” (research institutes) and factories. NIIAS (the automated systems institute) and others like NIIET (Electronics Technology) worked on indigenous NC controllers, while machine tool plants in Moscow, Leningrad, and Kiev built NC lathes and mills. By the late 1960s, the USSR was producing hundreds of NC machines domestically, but it also imported models from the West. In 1967, for example, the Soviets purchased 300 NC machine tools from a French company - likely to quickly acquire technology. Western export controls (COCOM restrictions) limited some high-tech sales, but countries like Switzerland, Austria, and even Japan supplied the USSR with advanced NC machining centers in the 1970s. An intelligence report noted that during “1976–81 Japan delivered 286 NC machining centers to the USSR,” indicating significant external sourcing.

The Soviet approach to CAM software was somewhat insular. They developed their own versions of APT and other programming languages (often in Russian language form) and their own CAD systems for tool design. One notable Soviet CAM system was created at NIIST (Scientific Research Institute of System Engineering) which developed an APT-like language and even experimented with graphical programming interfaces by the late 1970s. However, due to siloed development across different ministries, many incompatible NC/CAM systems existed in the USSR. Unlike the West – where companies could sell to various industries – Soviet solutions were often tailor-made for a specific sector (e.g. one system for aerospace vs. another for shipbuilding). Despite technical prowess, the USSR struggled with computer hardware (often lagging a generation behind) and relied on imported electronics for the most advanced CNC controllers. By the 1970s, Soviet commentators admitted that the improvement in NC technology was still “not pronounced” enough; as of 1971, for instance, the Soviets even licensed some NC machine designs from Japan’s Fujitsu (related to stepper motors and basic control components). In essence, during the 60s–70s the USSR made big strides in numerical control (especially in quantity – thousands of NC machines were in use by 1980), but qualitatively they often trailed Western advances in CAM/CNC software and microelectronics. The centralized, military-focused nature of their industry limited widespread commercial CAM innovation. We will later see how many Soviet-developed CAM systems did not survive the Cold War’s end, as Russia transitioned to using more international software in the 1990s.

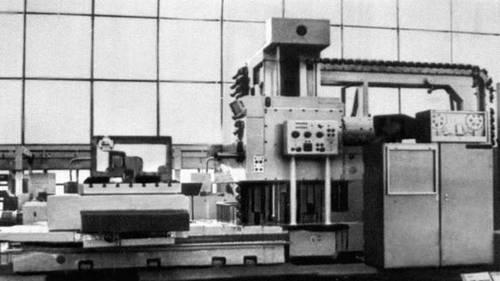

Soviet horizontal CNC drilling, milling, and boring machine with automatic tool changer. Model 2B622PMF2 (2A622F4).

Western Europe – Germany and the UK: In West Germany, the machine tool industry was determined not to fall behind. During the 1970s, German firms like Siemens, Heidenhain, and machine builders (Deckel, MAHO, etc.) aggressively developed CNC controls and CAM capabilities. By 1979, sales of German NC machine tools surpassed those of U.S. makers for the first time. German strategy focused on making NC applicable to a broader range of machines and prices. For example, Siemens’ Sinumerik control (first version 1960) evolved through the 60s and 70s to handle both high-end 5-axis machines and simpler lathes, capturing markets that American companies overlooked. This was helped by national initiatives and collaboration – the German government and industry had joint programs to advance NC, and by the late 70s their products were highly regarded. The lead did not last long: in 1980, Japan overtook both Germany and the U.S. in machine tool production, a sign of how fierce the competition had become.

The UK in the 1960s had a notable CAM hotspot in Cambridge. As mentioned, a Cambridge University team under Professor Donald Welbourn pursued computer-aided pattern-making and surface modeling. This led to the development of the “DUCT” CAD software (for designing complex 3D surfaces) and later a system for generating machining instructions for molds and dies – early CAM for tooling. After some prototypes in the 1970s, this work gave rise to Delcam (first established as a project in 1977 and later spun off fully in 1989).

Dr. Donald Welbourn.

Delcam’s software (eventually known as PowerMILL, etc.) became a leading CAM solution for sculptured surfaces, showing the UK’s contribution in bridging design geometry and manufacturing. Another British effort was Cambridge Numerical Control (CNC), a company founded in 1981 by John Ball and Tim Collett. Cambridge NC focused on software for controlling and networking CNC machines (like DNC systems and custom post-processors). While not a global brand, it exemplifies the many smaller firms that sprouted to service the growing CNC market. Europe also launched collaborative R&D programs soon afterwards - notably the ESPRIT and EUREKA initiatives of the 1980s, where countries pooled resources to develop advanced manufacturing technologies, including CAD/CAM software and standards.

Japan – Rise of Precision Manufacturing: By the late 1970s, Japan’s investment in precision manufacturing and automation paid off spectacularly. Companies such as FANUC, Mitsubishi, and Yaskawa became dominant suppliers of CNC control systems and servomotors. FANUC in particular, which had delivered its first NC controller to Makino in 1958, grew through the 60s by partnering with machine tool builders. In 1972, FANUC introduced the Series 2000C, an all-transistorized CNC control that was highly reliable; by the end of the 70s, they were exporting CNC units worldwide. The Japanese government, via MITI (Ministry of International Trade and Industry), actively supported technology transfer and adoption – it encouraged companies to license Western patents and then improve upon them. Japanese manufacturers focused on cost-effective NC: they produced smaller, affordable CNC machines (like commodity milling machines and lathes) which undercut pricier American ones in many markets. This strategy succeeded to the point that by 1987, Japan held many of the spots among the world’s top 10 machine tool builders, and only one U.S. company, Cincinnati Milacron, remained on the list, having fallen to rank 8. In terms of CAM software, Japanese firms initially used imported systems (some early CAD/CAM from companies like Computervision were used in Japanese automotive design), but they soon developed indigenous software. Toyota, for instance, introduced CAD/CAM systems in its production by the mid-1980s (Toyota’s history notes that by 1984–86 they had developed a personal-computer-based CAD/CAM system for automotive design and manufacturing).

Japanese machine tool companies also bundled programming software with their machines – e.g., Mazak and Okuma created conversational programming interfaces in the 1980s that simplified generating toolpaths on the shop floor. While not “CAM software” in the PC sense, these were important in enabling machinists to program CNCs without manual G-coding. The rise of Japanese excellence in both hardware (machines/controls) and process (manufacturing quality methods like Toyota’s) in the 70s–80s significantly impacted the global CAM landscape, forcing others to innovate or lose market share.

1968 - Developed the first Mazak NC lathe, MTC series.

Technological Strides in the 1960s–70s

During this period, technology advanced on multiple fronts, enabling CAM to progress:

From NC to CNC: Early NC had no “computer” on the machine – just hardwired electronic controllers. In the 1960s, as transistors became widespread and integrated circuits arrived, it became feasible to include a digital computer in the machine tool control unit. This gave birth to CNC (Computer Numerical Control). Instead of just reading a tape, a CNC machine had a built-in computer that could store programs, perform calculations, and accept interactive input. By the late 1960s, CNC prototypes existed, and in the 1970s CNC systems became standard. A noted example is the retrofitting of NC machines with DEC PDP-8 minicomputers in the late 60s – these minicomputers were dedicated to single machines, a huge innovation that made controls cheaper and more flexible. CNC dramatically improved the ease of editing programs and switching jobs, fueling wider adoption. As one account describes, “the integration of a computer into the control unit… opened up a completely new range of possibilities… machines became more flexible and could process complex command sequences. Programs could be saved and reused at will.” In short, CNC was an evolutionary leap that CAM software could leverage (since now the software could communicate with a computer on the machine, not just punch tape).

Interactive Graphics and 3D Modeling: The 60s saw the birth of interactive computer graphics – Ivan Sutherland’s Sketchpad in 1963 demonstrated graphical user interaction for design. By the end of the 60s, companies like Control Data (with Digigraphics) and IBM were offering graphical CAD systems. This was crucial for CAM, because to program a complex 3D part, it helps immensely to visualize it on screen and let the computer calculate toolpaths. In 1968, French engineer Pierre Bézier at Renault developed a pioneering 3D CAD/CAM system called UNISURF. UNISURF allowed designing automobile body surfaces with Bézier curves and then milling the dies for those panels – an example of early 3D CAM (Renault used it for car bodies in the 1970s). Likewise, the Cambridge (UK) team’s work on sculptured surface modeling aimed to let 3D shapes be machined accurately. By the mid-1970s, 3D CAM for things like aircraft engine blades and automotive molds was becoming a reality in high-end sectors. The APT language itself was extended to handle 3D tool motions and five-axis machines in this era (APT-III, etc.). The progression from strictly 2D/2.5D operations (simple profiles, holes) to true 3D surface machining was a major tech shift of the 1970s, setting the stage for modern multi-axis CAM.
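To make the link between Bézier curves and toolpaths concrete, here is a minimal Python sketch that evaluates a Bézier curve with de Casteljau’s algorithm and samples it into a list of points a cutter could follow. It is an illustration only and not a reconstruction of UNISURF: the function names `de_casteljau` and `sample_curve`, the control points, and the uniform sampling in the parameter `t` are all assumptions.

```python
def de_casteljau(control_points, t):
    """Evaluate a Bezier curve at parameter t (0 <= t <= 1).

    Repeatedly interpolates between neighbouring control points
    until a single point remains.
    """
    pts = [tuple(p) for p in control_points]
    while len(pts) > 1:
        pts = [
            tuple((1 - t) * a + t * b for a, b in zip(p, q))
            for p, q in zip(pts, pts[1:])
        ]
    return pts[0]


def sample_curve(control_points, steps):
    """Return steps + 1 points along the curve, uniformly spaced in t."""
    return [de_casteljau(control_points, i / steps) for i in range(steps + 1)]


# Hypothetical cubic profile in the XY plane (units are arbitrary).
profile = [(0.0, 0.0), (1.0, 2.0), (3.0, 2.0), (4.0, 0.0)]
for x, y in sample_curve(profile, 4):
    print(f"X{x:.3f} Y{y:.3f}")
```

A production CAM system would also offset the curve by the tool radius and choose the sampling density from a chordal tolerance rather than a fixed step count; both are left out here to keep the sketch short.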

The very beginning of digital representation - Ivan Sutherland’s Sketchpad.

Multi-Axis and Adaptive Control: Early NC machines were typically 3-axis (X, Y, Z linear axes). In the 1960s, machines with additional axes (like rotary axes for tool orientation) were developed, especially for complex aerospace parts (e.g., impellers, turbine blades). By the 1970s, 5-axis CNC machines existed, though they were rare and expensive. CAM software had to evolve to support multi-axis trajectories. Government labs and companies collaborated on this; for example, the U.S. Air Force funded research into 5-axis milling strategies for complex “impossible shapes” as early as the 1970s. Another innovation was adaptive control – using sensor feedback (e.g., force, temperature) to let the CNC adjust cutting parameters in real time. NIST (then NBS) in the U.S. experimented with adaptive CNC systems in the late 70s, which was an early form of “smart” CAM where the program could respond to conditions. While adaptive control did not see widespread use until much later, it showed the increasing sophistication of CAM-related research in this era.
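The adaptive control idea, adjusting cutting parameters from sensor feedback, can be sketched as a simple proportional rule: slow the feed when the measured cutting force exceeds a target, and speed it up when the force is below it. The Python below is a hedged illustration, not a description of any historical NBS or vendor system; the function name `adapt_feed`, the gain, and the feed limits are hypothetical values chosen for the example.

```python
def adapt_feed(feed, measured_force, target_force,
               gain=0.5, min_feed=50.0, max_feed=500.0):
    """Return an adjusted feed rate based on cutting-force feedback.

    A proportional correction: the relative force error scales the
    current feed, and the result is clamped to the allowed range.
    All parameter defaults are illustrative assumptions.
    """
    error = (target_force - measured_force) / target_force
    new_feed = feed * (1.0 + gain * error)
    return max(min_feed, min(max_feed, new_feed))


# Simulated force readings (arbitrary units) during one pass.
feed = 200.0
for force in [100.0, 150.0, 150.0, 80.0]:
    feed = adapt_feed(feed, force, target_force=100.0)
    print(f"force={force:.0f} -> feed={feed:.1f}")
```

Real adaptive controllers also have to deal with sensor noise, delays, and stability limits, which is part of why the technique took so long to reach everyday use.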

Broader Industrial Use: During the economic turbulence of the 1970s (oil shocks, etc.), manufacturers sought efficiency. NC/CAM offered a way to produce more with less labor, which was appealing when wages rose. However, a retrospective view suggests American firms were slow to adapt CAM to mass production needs, focusing instead on high-profit, low-volume applications (like aerospace). In contrast, German and Japanese firms applied NC to more routine manufacturing early on (for example, Japan automated production of consumer electronics molds and automotive parts). This “decentralization” of CAM – moving from specialty shops to general factories – was a key shift of the late 70s and early 80s. By 1980, one could find CNC machines not just at Boeing or NASA, but also on automotive engine lines, in appliance factories, and in job shops, particularly in Europe and Japan. This broad adoption was helped by simpler CAM software becoming available on minicomputers, and by training programs (the Society of Manufacturing Engineers and others began offering NC programming courses in the 70s).

In sum, the 1960s–70s period firmly established CAM as a critical manufacturing technology. NC had evolved into CNC, programming moved from punched tapes to computer terminals, and both 2D and 3D machining capabilities expanded greatly. The Cold War spurred significant government investment (on both sides), which accelerated innovation. By the end of the 1970s, the competitive balance in machine tool and CAM technology had shifted: the U.S. no longer held a monopoly. Germany and Japan emerged as CAM/CNC powerhouses, and the USSR, while less visible internationally, had built a large internal base of NC machines (though often a generation behind). The stage was set for the next act – the 1980s – which would introduce personal computing to CAM and witness the rise of many software companies, as well as consolidation of the pioneers.

The Rise of Commercial CAM Software and Workstations (1980s)

The 1980s were a transformative decade for CAM in machining. Three key trends defined this era: the migration of CAD/CAM to powerful workstations and personal computers, the introduction of user-friendly 3D CAM software for industry, and a wave of new companies and products entering the CAM market (with some older players declining or merging). By the end of the ’80s, CAM had moved from an esoteric, high-end tool to a more widely accessible technology, used in countless machine shops and by small manufacturers. Let’s explore the highlights of this period:

Computing Revolution – From Mainframes to PCs: In the 1970s, most CAD/CAM systems ran on either mainframe computers or minicomputers (like DEC’s PDP-11 or VAX). These were expensive and required specialized terminals. Around 1980, the landscape shifted with the advent of the engineering workstation – companies like Sun, Apollo, DEC, and IBM made 32-bit graphics workstations that could sit in a department and run CAD/CAM software with impressive (for the time) interactive graphics. Simultaneously, the IBM PC (introduced 1981) and compatibles created an opening for smaller CAM software on personal computers.

A PDP–11/70 system that included two nine-track tape drives, two disk drives, a high speed line printer, a DECwriter dot-matrix keyboard printing terminal and a cathode ray tube terminal installed in a climate-controlled machine room.

By the mid-1980s, PC-based CAM software had emerged that targeted smaller machine shops that couldn’t afford a $100k workstation. A notable example is Mastercam, first released in 1983 by CNC Software Inc., which ran on DOS PCs. Mastercam provided 2D and 3D CAM capabilities at a fraction of the cost of mainframe systems, quickly gaining popularity among job shops and toolmakers. Other PC CAM programs of the 80s included SmartCAM (by Point Control, 1985) and GibbsCAM (1984, by Bill Gibbs). These offered graphical toolpath programming on a standard PC. Meanwhile, high-end systems like Unigraphics, CATIA, and Computervision CADDS migrated from proprietary hardware to UNIX workstations, greatly improving performance and reducing cost per seat. For instance, Unigraphics (originally from United Computing, by then part of McDonnell Douglas) was ported to the DEC VAX and later to Apollo/SGI workstations in the 1980s, and Dassault Systèmes’ CATIA (which started on IBM mainframes in the late 70s for aircraft design) also moved to IBM UNIX machines by 1986. This democratization of computing meant CAM could reach many more users and departments.

Apollo dn330.

Major CAM Software Players of the 1980s: A number of companies came to dominate CAM software in this decade, either as independent vendors or as part of CAD/CAM suites:

- ComputerVision (CV): An early CAD/CAM pioneer, CV’s CADDS software was widely used in automotive and aerospace. CV introduced 3D CAM capabilities (surface machining, multi-axis) in its CADDS3 and CADDS4 systems. By the late 80s, however, CV was struggling financially and would later be acquired (by Parametric Technology Corp in 1998).

- Dassault Systèmes: Makers of CATIA, which by version 3 (mid-80s) included an advanced CAM module. Boeing adopted CATIA, including its manufacturing component, for the 777 program (launched in 1990), signaling trust in fully integrated CAD/CAM. CATIA originated in France (with a heavy IBM partnership) and became a standard in aerospace.

- Unigraphics (UG): Initially developed by United Computing/McDonnell Douglas, UG was a high-end CAD/CAM system known for strong CAM for complex 3D parts. It had its roots in the aforementioned ADAM code from Dr. Hanratty and in the 1980s was used by companies like General Motors and General Electric. McDonnell Douglas had acquired United Computing, the company that made Unigraphics, in 1976, and in 1991 the business was sold to EDS/GM.

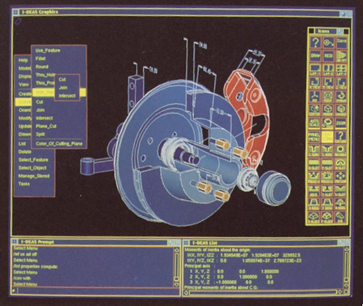

- SDRC (Structural Dynamics Research Corp): Offered I-DEAS, a CAD/CAM/CAE system released in 1982. I-DEAS had solid modeling and integrated CAM for manufacturing. SDRC was an independent company until merging with UG in 2001.

- PTC (Parametric Technology Corporation): Founded 1985, PTC shook up the CAD world with Pro/ENGINEER (introduced 1987) – widely regarded as the first commercially successful parametric, feature-based solid modeling CAD. While PTC’s focus was CAD, they did introduce a CAM module (Pro/Manufacturing) to generate toolpaths from the solid model. The parametric approach meant toolpaths could update automatically if the design changed – a big advantage in concurrent engineering. By heralding “feature-based modeling” and parametric links, Pro/E influenced all CAD/CAM, forcing others to adopt parametric techniques in the 90s.

- CNC Software (Mastercam): As mentioned, Mastercam targeted the low-mid market with a PC-based system. By 1987 it added 3D surfacing and became one of the most popular CAM programs by seat count.

- Others: ESPRIT by DP Technology (1982) was another independent CAM software focusing on CNC programming ease; Cimatron (Israel, founded 1982) specialized in toolmaking CAM; Delcam (UK) formally launched its commercial PowerMILL in late 1980s for complex 3D machining, after being an R&D project earlier; Schlumberger (a tech conglomerate) acquired Applicon and later introduced Bravo3 CAD/CAM in 1988; and Intergraph released EMS (Engineering Modeling System) which had manufacturing modules.

The 1980s also saw some consolidation and exits: for example, Control Data Corporation (CDC), which had been active with Digigraphics, pivoted away from CAD/CAM to other software (and by late 80s, CDC exited the CAD market). Auto-trol and Calma (another early CAD company) likewise faded or refocused by decade’s end. A telling statistic is that in 1982, there were dozens of CAD/CAM software firms, but by 1990, many had merged or been acquired as the market matured.

Typical I-DEAS screen image.

Advances in Capability - The functionality of CAM software greatly improved in the ’80s:

- 3D Solid-Based CAM: With the rise of solid modeling (led by PTC Pro/ENGINEER’s debut in 1987), CAM systems could increasingly take a solid model and automatically generate toolpaths. This reduced the tedious task of defining geometry for machining – the software could derive cutter paths directly from the CAD model. By the end of the decade, systems like CATIA, Unigraphics, and I-DEAS offered solid/surface hybrid modeling with CAM.

- Feature-based Machining: Building on the parametric idea, researchers began developing feature recognition – the software identifies features like holes, pockets, and bosses on the CAD model and suggests machining processes. An EU project in the late 80s called COMBI (Computer-Integrated Manufacturing of Mechanical Parts) worked on this, and early commercial feature-based CAM tools started appearing in the early 90s.

- Graphical Simulation: CAM software in the 80s started including graphical simulation of machining – showing a 3D view of the cutting tool and the material removal. This helped users verify programs and avoid collisions. For instance, Mastercam and others had at least rudimentary toolpath backplot and verification by the late 80s.

- Multi-Axis Mastery: What was cutting-edge in the 70s (5-axis milling) became more routine in the 80s for high-end industries. CAM software evolved to support 4-axis (e.g., rotary 4th axis for mills or multi-axis lathes) and full 5-axis continuous machining. Software like CATIA and UG were used to program 5-axis milling for complex aerospace parts (blisks, impellers). In 1989, a German company that would later become Open Mind began developing advanced multi-axis toolpath algorithms, leading to its hyperMILL software in the ’90s – an indicator of specialized multi-axis CAM focus in Europe.

- Integration and Data Exchange: The concept of CAD/CAM integration became standard. It was no longer acceptable to have a “wall” between design and manufacturing – clients wanted seamless integration. Standards like IGES (Initial Graphics Exchange Specification, 1980) and later STEP were created so that models could be transferred between systems. For example, a model designed in CATIA could be exported via IGES to a standalone CAM like Mastercam for programming. By late 80s, IGES was widely supported, easing data flow and thus CAM adoption (since CAM software could work with models from any CAD system).

- Human Interfaces: Early CAM had cryptic, text-based interfaces. In the 80s, user interfaces became more visual. The advent of bit-mapped graphics and GUI (think Apple Macintosh in 1984, Windows in late 80s) influenced CAD/CAM UI design. While most CAD/CAM ran on UNIX workstations with proprietary GUIs, there was a clear trend to make programming more interactive – using menus, icons, and even lightpens or tablets for input. The result: programming a part in 1988 was far easier than in 1978, requiring less specialized knowledge of G-codes and more intuitive human interaction.
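
The feature-based idea from the list above can be illustrated with a minimal Python sketch: once features have been recognized on a model, simple rules propose machining operations for each one. Everything here is an illustrative assumption rather than the logic of any product named in this article: the `Feature` class, the `"hole"`/`"pocket"` labels, the `plan_operations` function, and the rule of thumb that holes deeper than three diameters get a peck-drilling cycle.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Feature:
    kind: str     # hypothetical labels: "hole" or "pocket"
    size: float   # hole diameter or pocket width, in mm
    depth: float  # depth below the top face, in mm


def plan_operations(features: List[Feature]) -> List[str]:
    """Suggest a machining sequence for already-recognized features."""
    ops: List[str] = []
    for f in features:
        if f.kind == "hole":
            ops.append(f"spot drill D{f.size:g}")
            # Assumed rule of thumb: deep holes (> 3x diameter) are peck drilled.
            cycle = "peck drill" if f.depth > 3 * f.size else "drill"
            ops.append(f"{cycle} D{f.size:g} to depth {f.depth:g}")
        elif f.kind == "pocket":
            ops.append(f"rough pocket W{f.size:g} to depth {f.depth:g}")
            ops.append(f"finish pocket walls W{f.size:g}")
        else:
            # Unknown feature types are flagged for the programmer.
            ops.append(f"manual review: {f.kind}")
    return ops
```

For example, `plan_operations([Feature(kind="hole", size=8, depth=30)])` would propose a spot drill followed by a peck-drilling cycle, because the hole is deeper than three diameters. Real feature recognition is far harder than this rule table suggests, since the features must first be extracted from the geometry.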

Industry Impact - CAM adoption deepened across sectors in the ’80s:

- Aerospace: Remained at the forefront – e.g., the Boeing 777, whose development began around 1990, became the first aircraft to be 100% digitally designed and manufactured (using CATIA for both), with no paper drawings. Military programs (like stealth aircraft) also pushed CAM for complex composites and metal cutting. The U.S. Strategic Defense Initiative (SDI) in the 80s funded some advanced manufacturing R&D.

- Automotive: By the mid-80s, all major car manufacturers had CAD/CAM in their product development. Japanese automakers like Toyota led in applying CAM for high-precision machining of engine components and molds, contributing to their famed quality. Toyota’s records show introduction of CAD/CAM around 1984–86 in their production engineering. In the West, companies like Ford and GM invested in large CAD/CAM installations (GM partnered with EDS and ultimately bought into Unigraphics; Ford worked with Computervision and CATIA).

- Tool and Die/Mold Making: This industry perhaps saw one of the biggest transformations. The shapes of injection molds and stamping dies are complex free-form surfaces. In the 80s, CAM software (like CV, CATIA, or Delcam’s offerings) started to handle these well. Instead of handcrafted die making, companies could machine electrode patterns or dies directly from CAD models. This drastically cut lead times in consumer product manufacturing.

- General Manufacturing: Thousands of CNC mills and lathes were sold to general manufacturing in the 80s, and many were programmed with CAM software offline. Smaller job shops often started with 2D CAM (for profiles, drill patterns), gradually moving to 3D as they invested in better tools. The availability of affordable PC-based CAM was key here. By 1989, even a 5-person machine shop could realistically own a 286-PC running Mastercam or SmartCAM to program their parts – something unthinkable a decade prior.

Case of Pioneers – Fate by late ’80s: Some early pioneers like Numerical Control Inc. (the 1952 firm) had long since disappeared or been subsumed as NC became mainstream. IBM, which helped in the 60s (with DAC-1 and others), stepped back from directly making CAM software, instead partnering (IBM became the global reseller of CATIA). Control Data left the CAD/CAM field to focus on its core computing business. NIST (National Institute of Standards and Technology, renamed from NBS in 1988) continued contributing in behind-the-scenes ways – for example, NIST released the Enhanced Machine Controller (EMC) in 1989, an open-source CNC control that eventually became today’s LinuxCNC. That project, while aimed at small machines, influenced the hobby and education space. On the other hand, companies like FANUC thrived through the 80s – by supplying controls to a majority of the world’s CNC machines, FANUC indirectly set standards that CAM software had to meet (i.e. everyone had to output G-code compatible with FANUC controllers, which became a de facto standard syntax).

Overall, the 1980s ended with CAM technology both advanced and widely diffused. Many brands had come and some gone. Computervision – once dominating turnkey CAD/CAM in the 70s – was facing decline, eventually to be acquired in 1998. Cambridge NC in the UK pivoted to niche solutions and training rather than mass-market CAM. Meanwhile, survivors like Mastercam (which continues to this day) and Unigraphics (later NX) solidified their positions. The competitive landscape set up in the 80s foreshadowed the consolidations of the 90s, where the CAM industry would see mergers and the influence of even larger tech players.

Consolidation, Windows, and Globalization (1990s)

In the 1990s, the CAM software industry underwent significant consolidation and adaptation. Many of the pioneering companies were acquired or merged into larger organizations, new dominant players emerged, and the technology itself migrated onto the Microsoft Windows platform as PCs and Windows NT became powerful enough for CAD/CAM. Additionally, geopolitical changes – notably the end of the Cold War with the breakup of the USSR in 1991 – created new markets and new competition. By the end of the 90s, the CAM market had coalesced around a smaller set of major suites (often part of broader CAD/CAM/PLM packages) and a few independent stalwarts.

Key features of the 1990s CAM landscape:

Mergers and Acquisitions: The decade saw the creation of several CAD/CAM behemoths:

- Unigraphics + I-DEAS → UGS: In 1991, EDS (Electronic Data Systems, owned by GM at the time) acquired the Unigraphics division from McDonnell Douglas. This made Unigraphics (UG) the core of EDS’s PLM software business. The unit went on to add other companies, including the visualization software firm Engineering Animation, Inc., and in 2001 EDS purchased SDRC, the maker of I-DEAS. EDS then merged Unigraphics and I-DEAS into one unit, eventually named UGS. The combined product would later be called NX. This lineage is important: NX traces back to 1970s Unigraphics (and thus to Hanratty’s ADAM code) and absorbed SDRC’s capabilities. Today’s NX is effectively a continuation of those earlier CAM systems, making it one of the longest-lived CAM brands (surviving under different owners from GM/EDS to UGS to Siemens).

- Dassault and IBM alliances: IBM, which had been reselling CATIA, deepened its partnership with Dassault Systemes. While not an acquisition, IBM’s global reach meant CATIA (with its CAM modules) became widespread in automotive and aerospace.

- PTC acquisitions: Parametric Technology Corp rose fast with Pro/E. In 1998, PTC acquired Computervision, the struggling pioneer, thereby getting CV’s CADDS and MEDUSA systems. PTC mainly wanted CV’s customer base and technology. Computervision’s CAM products were eventually phased out or integrated. By acquiring CV, PTC effectively took one of the last big independent CAD/CAM players off the market.

- EDS/UGS to Siemens: Though this happened in 2007 (just outside the 90s), it was set up in the late 90s – UGS was spun off from EDS and later sold to Siemens. This placed Unigraphics/NX under a major industrial conglomerate’s wing, ensuring its longevity.

- Autodesk enters CAM (later): In the 90s, Autodesk (known for AutoCAD) was actually not a CAM player. They focused on CAD for architecture and mid-range MCAD (Mechanical Desktop). It wasn’t until the mid-2000s and 2010s that Autodesk aggressively entered CAM by acquiring Delcam (2014) and others. But Autodesk’s presence in CAD did influence CAM indirectly – as many machine shops used AutoCAD for 2D drawings and then third-party CAM for machining.

- Others: Matra Datavision (French) sold its Euclid CAD/CAM to Dassault in the late 90s, and Intergraph spun off its CAD/CAM to focus on process/plant design, with that unit eventually going to Bentley or Hexagon. Camax (a CAM company known for the CAMAND software) merged with Gibbs in 1995, then was acquired by SDRC. Cimatron and GibbsCAM would also combine into a single CAM portfolio, though only later, in 2008. Tecnomatix emerged (in Israel) focusing on manufacturing process simulation and was later bought by UGS. So, by 2000, a lot of logos from the 80s had disappeared into larger entities.

Windows and the Demise of Proprietary Workstations: A major technology shift was the move to Windows-based PCs. In the early 90s, UNIX workstations (Silicon Graphics, HP, IBM RS/6000, etc.) still ruled high-end CAD/CAM. But with the arrival of Windows NT and improved Intel processors (Pentium Pro, etc.), software vendors began porting to Windows. By late 90s, most CAD/CAM software had a Windows version if not exclusively Windows. For example, Unigraphics released an NT version in the mid-90s; CATIA V5 (released 1998) was a rewrite that ran on Windows; PTC Pro/E moved to Windows as well. The user community demanded the lower cost and uniformity of PC hardware. This transition was not without challenges – performance and graphics had to catch up – but by 2000, UNIX-based CAD/CAM was declining. One effect of this was the standardization of GUI: software adopted more Windows-like interfaces, making them easier to learn. It also squeezed out some smaller players who couldn’t afford to rewrite code for a new OS. On the flip side, it allowed new entrants from the PC world to grow (Mastercam and others thrived in Windows; SolidCAM and CAMWorks appeared in late 90s integrated with SolidWorks, riding the Windows-based solid modeling wave). The Windows era also facilitated integration – e.g., using OLE/COM technology to link CAD and CAM, and easier file management in Windows networks.

Fate of Soviet CAM: After the Soviet Union collapsed in 1991, many Russian industries opened to Western software. The indigenous Soviet CAM systems often could not compete with the polished products from the West and Japan. Many Russian manufacturing enterprises in the 90s adopted software like Mastercam, Unigraphics, or CATIA for their newfound need to interface with global contractors. Some former Soviet CAD/CAM developers formed companies or joined Western firms. For instance, Russian experts contributed to the development of T-FLEX CAD and SprutCAM (a Russian CAM software that emerged in late 90s). However, institutes like NIIAS lost government funding and had to either convert to commercial consultancy or shut down. In essence, the breakup of the USSR largely spelled the end (or drastic reduction) of unique Soviet CAM product lines – a few may have merged into Russian IT companies, but globally they left little trace. Conversely, Eastern Europe became a new market for Western CAM vendors in the 90s.

Rise of Asia: While Japan was already a leader in CNC hardware, by the 90s other Asian players started contributing in CAM software. For example, South Korea and Taiwan began developing or customizing CAM software for their toolmaking industries. However, the most notable was that Japanese machine tool companies started bundling more sophisticated CAM-like software with their equipment (Mazak’s Mazatrol, Fanuc’s programming tools, etc., became very user-friendly). Additionally, Japan’s Hitachi had a product called Hitachi CAM in the 90s used internally and by some suppliers. Asia also became the manufacturing center of the world in this decade, meaning the volume of CNC machines (and therefore CAM programming needs) exploded. Yet, much of the CAM software used in Asia was still from US/Europe or Japan’s Fanuc-level tools. It wouldn’t be until the 2000s that China, for instance, would create notable CAM software of its own.

Parametric and Integrated CAD/CAM/CAE: By the late 90s, the concept of Product Lifecycle Management (PLM) emerged – integrating CAD, CAM, and CAE (analysis) with data management. Companies like EDS (with UGS), Dassault, and PTC repositioned themselves as PLM solution providers rather than just CAD or CAM vendors. This meant that CAM was often sold as a module of a larger suite. For high-end users, the appeal was seamless data flow: design a part, run an FEA simulation, and generate the CNC program all in one environment with consistent data. The associativity introduced by parametric modeling allowed, for example, a change in a 3D model to automatically update the machining operations attached to it. This was a big productivity gain and a selling point of integrated systems like Pro/E, CATIA, and NX. At the same time, standalone CAM vendors continued to innovate in their niches (e.g., Open Mind’s hyperMILL launched in the mid-90s focusing on high-end 5-axis machining strategies and surface finishing, and it persists today as a top-tier CAM for complex milling).
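
The associativity described above can be sketched in a few lines of Python: the toolpath is computed from the model's parameters, so changing a dimension and regenerating yields updated cutter passes with no re-programming. This is a simplified illustration under stated assumptions, not how Pro/E, CATIA, or NX implement it. `PocketModel` and `zigzag_passes` are hypothetical names, and the pocket is assumed to be a plain rectangle with one corner at the origin.

```python
from dataclasses import dataclass
from typing import List, Tuple

Point = Tuple[float, float]


@dataclass
class PocketModel:
    width: float   # pocket size along Y, in mm
    length: float  # pocket size along X, in mm


def zigzag_passes(model: PocketModel, tool_diameter: float,
                  stepover: float) -> List[Tuple[Point, Point]]:
    """Return (start, end) tool-center passes that clear a rectangular pocket."""
    if stepover <= 0:
        raise ValueError("stepover must be positive")
    r = tool_diameter / 2
    x_min, x_max = r, model.length - r
    y_min, y_max = r, model.width - r
    if x_max < x_min or y_max < y_min:
        raise ValueError("tool does not fit in the pocket")

    # Step across the pocket, then add a last pass along the far wall.
    ys: List[float] = []
    y = y_min
    while y < y_max - 1e-9:
        ys.append(y)
        y += stepover
    ys.append(y_max)

    passes: List[Tuple[Point, Point]] = []
    for i, y in enumerate(ys):
        if i % 2 == 0:   # even passes cut left to right
            passes.append(((x_min, y), (x_max, y)))
        else:            # odd passes cut right to left
            passes.append(((x_max, y), (x_min, y)))
    return passes
```

With a 20 mm wide pocket, a 10 mm tool, and a 5 mm stepover, the function returns three passes. Setting `model.width = 30` and calling it again returns five. The toolpath follows the design change because it is derived from the model rather than stored as fixed coordinates.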

Notable Brands: Disappearances vs. Survivals: Summarizing some outcomes by around 2000:

Disappeared or Absorbed: Computervision (into PTC), Applicon (into UGS via various owners), Calma (into Cadence for PCB design), Numerical Control Inc. (1950s pioneer, long gone), Concord/Autonumerics (early NC companies gone), Cambridge NC (the company still existed but as a service provider, its name not widely known outside the UK), Micro Engineering Solutions (makers of SmartCAM, got sold to EMS and then to Camsoft). Also, many smaller CAM tools either ceased or got acquired in the CAM consolidation wave around 2000.

Survivors and Evolvers:

- Mastercam – continued as an independent family-run company (CNC Software) through the 90s and 2000s, growing to reportedly the highest number of installed seats. It survived by focusing on ease of use and the mid-market and is now (2020s) owned by Sandvik but still essentially Mastercam.

- hyperMILL – developed by Open Mind, which was founded in 1994 (Munich, Germany), it carved out a strong reputation in 5-axis and remains a key CAM product (now often used alongside big CAD systems).

- NX – as discussed, is the lineal descendant of Unigraphics and maintained its presence, especially after Siemens’ acquisition gave it stability.

- CATIA – survived and thrived under Dassault, with CAM as part of its portfolio (particularly in aerospace and automotive mold/die).

- PTC – interestingly, PTC’s Pro/ENGINEER (now Creo) was very successful in CAD but its integrated CAM (Pro/Manufacturing) never dominated the CAM market; many Pro/E users still preferred dedicated CAM software. Over time, PTC somewhat de-emphasized CAM, focusing on CAD and PLM, which left Creo’s CAM module less prominent. This illustrates that not all integrated CAM overtook specialized CAM – the latter retained strong positions.

- ESPRIT, GibbsCAM, CAMWorks, EdgeCAM, SurfCAM – these mid-tier CAM products survived the 90s as well, often by focusing on CNC lathe programming, mill-turn, or being integrated with specific CAD systems. Some of them would later be acquired by larger metrology or tooling companies in the 2000s and 2010s, or tied closely to a CAD platform (e.g., CAMWorks integrated with SolidWorks, GibbsCAM later bought by 3D Systems).

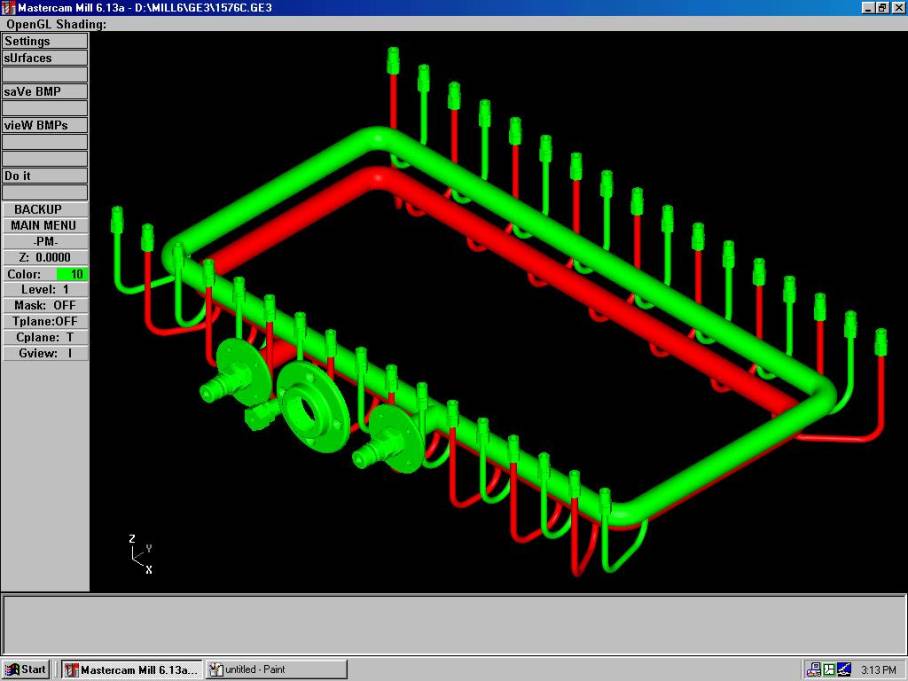

Interface of one of the early versions of Mastercam.

By the end of the 1990s, CAM’s industrial role was deeply entrenched. In aerospace, no airframe or engine was built without CAM; in automotive, every major manufacturer had fully digital processes for going from CAD to CAM to CNC on the factory floor (leading to shorter model cycles and higher quality). The tooling industry was largely CAD/CAM-based – the old craft of manual toolmaking had largely yielded to CNC machining of dies. The economic conditions of the 90s – globalization and offshoring – also meant that CAM had to be adopted to remain competitive; companies in high-wage countries automated heavily (with CAM and CNC) to compete with low-cost labor elsewhere, and those in low-wage countries adopted CAM to quickly acquire advanced manufacturing capabilities.

The geopolitical impact of the Cold War’s end also meant defense cutbacks in the West, which pushed many aerospace/defense contractors to diversify or merge (e.g., Lockheed and Martin Marietta). Those that remained placed even more emphasis on efficiency – again benefiting CAM software usage to reduce waste and cycle time. Additionally, global collaboration (for example, a car designed in Germany might be manufactured in the US or vice versa) meant CAM data had to be portable and standardized, which accelerated adoption of standards like STEP (Standard for the Exchange of Product model data) in the late 90s as an improvement over IGES. The EU funded projects to enhance such standards and improve pan-European digital manufacturing compatibility, reflecting the economic importance of CAM in international trade. As the 90s closed, CAM systems were poised to enter the next frontier: the Internet age and globalization of software. Companies started exploring web-based collaboration for manufacturing, and concepts like “factory of the future” were in vogue (with CAM as a central component). Little did they know, the 2000s and 2010s would bring cloud computing and further shake-ups, which we will address next.

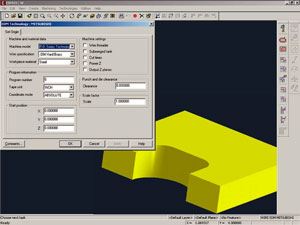

ESPRIT/W 4.0 Interface: Wire EDM CAM Software (Year 2000)

Towards the 21st Century: Cloud CAM and Modern Trends (2000s–2020s)

Entering the 2000s, CAM technology matured and became an assumed part of manufacturing – something taken as a given in modern machine shops. Some key modern trends include:

- Cloud-Based CAM: With increasing internet speeds and cloud computing, companies have started offering CAM solutions that leverage the cloud. For example, Autodesk Fusion 360 (mid-2010s) provides an integrated CAD/CAM platform where the heavy computation of toolpaths can be done on cloud servers, and collaboration (sharing CNC programs, version control) is cloud-enabled. This is somewhat a return to centralized computing (reminiscent of the mainframe days, but now the “mainframe” is a cloud data center). It allows even small workshops to access high-end CAM capabilities without needing a powerful local workstation, just an internet connection. Other cloud initiatives include online toolpath generators and platforms that integrate CAD/CAM with e-commerce (e.g., Xometry’s online machining service uses behind-the-scenes CAM to quote and program parts on the fly). The public cloud market is huge – projected over $1 trillion by 2026 – and major CAD/CAM players (Siemens, Dassault, Autodesk, PTC) are all increasing cloud-based deployments.

- Continued Consolidation: The 2000s and 2010s saw even more consolidation: Siemens acquired UGS (NX) in 2007, Autodesk acquired Delcam (PowerMILL, FeatureCAM), 3D Systems acquired Cimatron (with GibbsCAM), and Hexagon AB acquired Vero Software (which had brands like EdgeCAM, SurfCAM) and DP Technology (ESPRIT). Essentially, the CAM market coalesced under a few corporate umbrellas: Siemens (NX CAM, CAMExpress), Dassault Systèmes (CATIA and integrated CAM in SolidWorks via CAMWorks), Autodesk (PowerMill, FeatureCAM, Fusion 360 CAM, HSMWorks), Hexagon (which owns ESPRIT and others as of 2020), and PTC (though PTC is less CAM-focused). Despite this, independent players like Open Mind (hyperMILL) and Mastercam have remained strong by focusing on technological excellence in toolpath strategies and user base loyalty.

- Advanced Technologies: Modern CAM must handle additive manufacturing (3D printing) toolpaths, robotics path planning, and incorporate artificial intelligence for optimizing machining strategies. While these are beyond the scope of historical discussion, they tie back to earlier innovations. For instance, adaptive control research from the 70s lives on in today’s AI-driven feed rate optimization features in CAM software. The parametric and feature-based groundwork of the 80s/90s now enables automation like generative design-to-CAM workflows.

- Survival of Legacy Brands: It’s interesting that some brands from the 1980s and 90s are still recognizable today, albeit under new ownership. Mastercam is still a leading CAM package in 2025 (now boasting over 280,000 seats). CATIA and NX are mainstays for high-end manufacturing. FANUC controllers still run a huge percentage of CNC machines worldwide, and thus the G-code dialect that CAM systems output remains essentially the one standardized in the 60s (EIA RS-274, later revised as RS-274-D, commonly known as G-code). This continuity shows how foundational the early CAM developments were – even as software adds flashy new features, the core concepts (geometry, motion control, code output) persist from decades past. In a very tangible way, today’s CAM engineer stands on the shoulders of giants: Parsons, Ross, Hanratty, Bézier, and many others who pioneered the ideas.
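
The "code output" step mentioned above is what a CAM post-processor does: it turns computed cutter positions into G-code blocks a controller can run. The Python sketch below shows this for a simple polyline, using only widely supported words (`G21` millimetre units, `G90` absolute positioning, `G0` rapid, `G1` linear feed, `M30` program end). The function name `toolpath_to_gcode` and the default feed rate and clearance height are assumptions made for illustration; real post-processors are tailored to each machine and controller.

```python
from typing import Iterable, List, Tuple

Point3 = Tuple[float, float, float]


def toolpath_to_gcode(points: Iterable[Point3], feed: float = 200.0,
                      safe_z: float = 5.0) -> List[str]:
    """Emit RS-274-style blocks for a polyline toolpath (illustrative only)."""
    pts = list(points)
    if not pts:
        return []
    x0, y0, z0 = pts[0]
    blocks = [
        "G21",                        # millimetre units
        "G90",                        # absolute positioning
        f"G0 Z{safe_z:.3f}",          # rapid to clearance height
        f"G0 X{x0:.3f} Y{y0:.3f}",    # rapid to above the start point
        f"G1 Z{z0:.3f} F{feed:.1f}",  # feed down to cutting depth
    ]
    for x, y, z in pts[1:]:
        blocks.append(f"G1 X{x:.3f} Y{y:.3f} Z{z:.3f}")  # cutting move
    blocks.append(f"G0 Z{safe_z:.3f}")  # retract
    blocks.append("M30")                # end of program
    return blocks
```

Calling `"\n".join(toolpath_to_gcode([(0, 0, -1), (10, 0, -1)]))` gives a short program that plunges to Z-1 and cuts a 10 mm line along X. The same handful of words would have been familiar to an NC programmer decades ago, which is the continuity the paragraph above describes.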

In conclusion, the journey of CAM systems for machining from the 1950s to today is a remarkable story of innovation, interdisciplinary collaboration, and adaptation to changing needs. It started as a military-sponsored experiment to machine aircraft parts with unprecedented accuracy, which then morphed into a broad industrial movement spanning the globe. Countries like the USA and USSR poured resources into automation to gain a strategic edge; companies like IBM, Control Data, and later Dassault and EDS turned these technologies into products; entrepreneurs like Hanratty and engineers like Bézier introduced concepts that fundamentally changed design and manufacturing. Along the way, we saw names rise and fall – from Computervision’s dominance and disappearance to Cambridge Numerical Control’s niche success and Mastercam’s steady growth – and we saw technologies evolve from punched tape to interactive graphics to cloud computing. The impact on industry cannot be overstated: CAM enabled the mass production of complex goods with consistency and quality that handcrafted methods could never achieve, fueling advances in aerospace, automotive, consumer electronics, and beyond.

As we reflect on this timeline, one quote from a recent editorial encapsulates the enduring legacy of CAM’s pioneers: “An interesting factoid about CAD/CAM is that 70% of all 3D mechanical CAD/CAM systems can trace their roots to CAD pioneer Patrick Hanratty’s original code.” The fact that so much of modern manufacturing software traces back to early work underscores how foundational those mid-20th-century breakthroughs were. CAM has indeed come a long way – yet it remains rooted in the ideas of automation, precision, and integration first conceived in the 1950s. And as CAM now enters the age of cloud and AI, it continues to build on the rich history we’ve explored, ensuring that the machines that make our world are as advanced as the products they create.

Sources:

- J. T. Parsons and F. L. Stulen’s early NC developments and MIT’s first NC machine

- Society of Manufacturing Engineers on the significance of NC (the “second industrial revolution”)

- CIA report on Soviet NC machine tools (Soviet prototype NC by mid-1950s)

- MIT and Air Force CAD project (DAC-1) enabling design-to-NC manufacturing in 1963

- Wikipedia: Lockheed’s use of CAD/CNC for C-5 Galaxy – first end-to-end CAM production

- Wikipedia: By 1970, many CAD/CAM firms existed (Intergraph, Computervision, UGS, etc.)

- History of numerical control: German and Japanese ascendancy in late 1970s

- Toyota’s 75-year history (introduction of CAD/CAM in mid-1980s)

- Rehana Begg, Machine Design (2024) – noting 70% of CAD/CAM roots from Hanratty, and ADAM’s licensing model

- Rehana Begg, Machine Design (2024) – noting ADAM formed basis of Unigraphics (now Siemens NX)

- Mastercam’s background and continued prominence (Reddit)

- Parametric CAD milestone: Pro/ENGINEER in 1987 and feature-based modeling